In the analysis of algorithms, asymptotic notations are used to denote a lower bound on asymptotic time taken by algorithm.

- It analyses the best-case situation of algorithm. It provides a equal or lower limit on the time taken by an algorithm in terms of the size of the input.

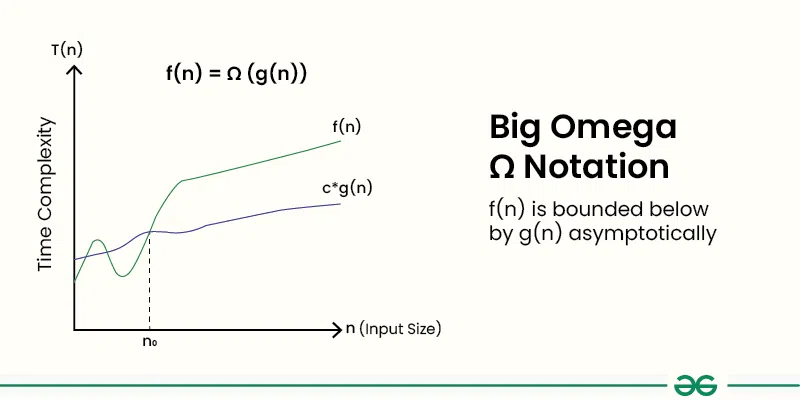

- Definition : Given two functions g(n) and f(n), we say that f(n) = Ω(g(n)), if there exists constants c > 0 and n0 >= 0 such that f(n) >= c*g(n) for all n >= n0. In simpler terms, f(n) is Ω(g(n)) if f(n) will always grow faster than c*g(n) for all n >= n0 where c and n0 are constants.

- We use it when we want to represent that the algorithm will take at least a certain amount of time or space.

- For any algorithm, we can say that its time complexity is Ω(1) as it represents a constant value and in this notation, we only have to guarantee a lower bound. However it is always recommended to find as close lower bound as possible.

How to Determine Big-Omega Ω Notation?

In simple language, Big-Omega Ω notation specifies the asymptotic lower bound for a function f(n). It bounds the growth of the function from below as the input grows infinitely large.

- Break the algorithm into smaller segments such that each segment has a certain runtime complexity.

- Find the number of operations performed for each segment(in terms of the input size) assuming the given input is such that the program takes the least amount of time.

- Add up all the operations and simplify it, let's say it is f(n).

- Remove all the constants and choose the term having the least order or any other function which is always less than f(n) when n tends to infinity.

Example of Big-Omega Ω Notation:

Consider an example to print all the possible pairs of an array. The idea is to run two nested loops to generate all the possible pairs of the given array:

// C++ program for the above approach

#include <bits/stdc++.h>

using namespace std;

// Function to print all possible pairs

int print(int a[], int n)

{

for (int i = 0; i < n; i++) {

for (int j = 0; j < n; j++) {

if (i != j)

cout << a[i] << " " << a[j] << "\n";

}

}

}

// Driver Code

int main()

{

int a[] = { 1, 2, 3 };

int n = sizeof(a) / sizeof(a[0]);

print(a, n);

return 0;

}

import java.util.*;

public class Main {

// Function to print all possible pairs

public static void print(int[] a, int n) {

for (int i = 0; i < n; i++) {

for (int j = 0; j < n; j++) {

if (i!= j)

System.out.println(a[i] + " " + a[j]);

}

}

}

// Driver Code

public static void main(String[] args) {

int[] a = {1, 2, 3};

int n = a.length;

print(a, n);

}

}

def print_pairs(a, n):

for i in range(n):

for j in range(n):

if i!= j:

print(a[i], a[j])

# Driver Code

a = [1, 2, 3]

n = len(a)

print_pairs(a, n)

using System;

public class Program {

// Function to print all possible pairs

public static void PrintPairs(int[] a, int n) {

for (int i = 0; i < n; i++) {

for (int j = 0; j < n; j++) {

if (i!= j)

Console.WriteLine(a[i] + " " + a[j]);

}

}

}

// Driver Code

public static void Main() {

int[] a = {1, 2, 3};

int n = a.Length;

PrintPairs(a, n);

}

}

function printPairs(a, n) {

for (let i = 0; i < n; i++) {

for (let j = 0; j < n; j++) {

if (i!== j)

console.log(a[i] + ''+ a[j]);

}

}

}

// Driver Code

const a = [1, 2, 3];

const n = a.length;

printPairs(a, n);

Output

1 2 1 3 2 1 2 3 3 1 3 2

In this example, it is evident that the print statement gets executed n2 times. When the input range tends to infinity therefore, the best-case running time of this program can be Ω(n2), Ω(log n), Ω(n), Ω(1), or any function g(n) which is less than or equal to n2 when n tends to infinity. However it is recommended to always use the closest lower bound to give a good idea about the time.

Big O vs Big Ω (Omega) vs θ (Theta)

Below is a table comparing Big O notation, Ω (Omega) notation, and θ (Theta) notation:

| Notation | Definition | Explanation |

|---|---|---|

| Big O (O) | f(n) ≤ C * g(n) for all n ≥ n0 | Describes the upper bound of the algorithm's running time. Used most of the time. |

| Ω (Omega) | f(n) ≥ C * g(n) for all n ≥ n0 | Describes the lower bound of the algorithm's running time . Used less |

| θ (Theta) | C1 * g(n) ≤ f(n) ≤ C2 * g(n) for n ≥ n0 | Describes both the upper and lower bounds of the algorithm's running time. Also used a lot more and preferred over Big O if we can find an exact bound. |

In each notation:

- f(n) represents the function being analyzed, typically the algorithm's time complexity.

- g(n) represents a specific function that bounds f(n).

- C, C1, and C2 are constants.

- n0 is the minimum input size beyond which the inequality holds.